
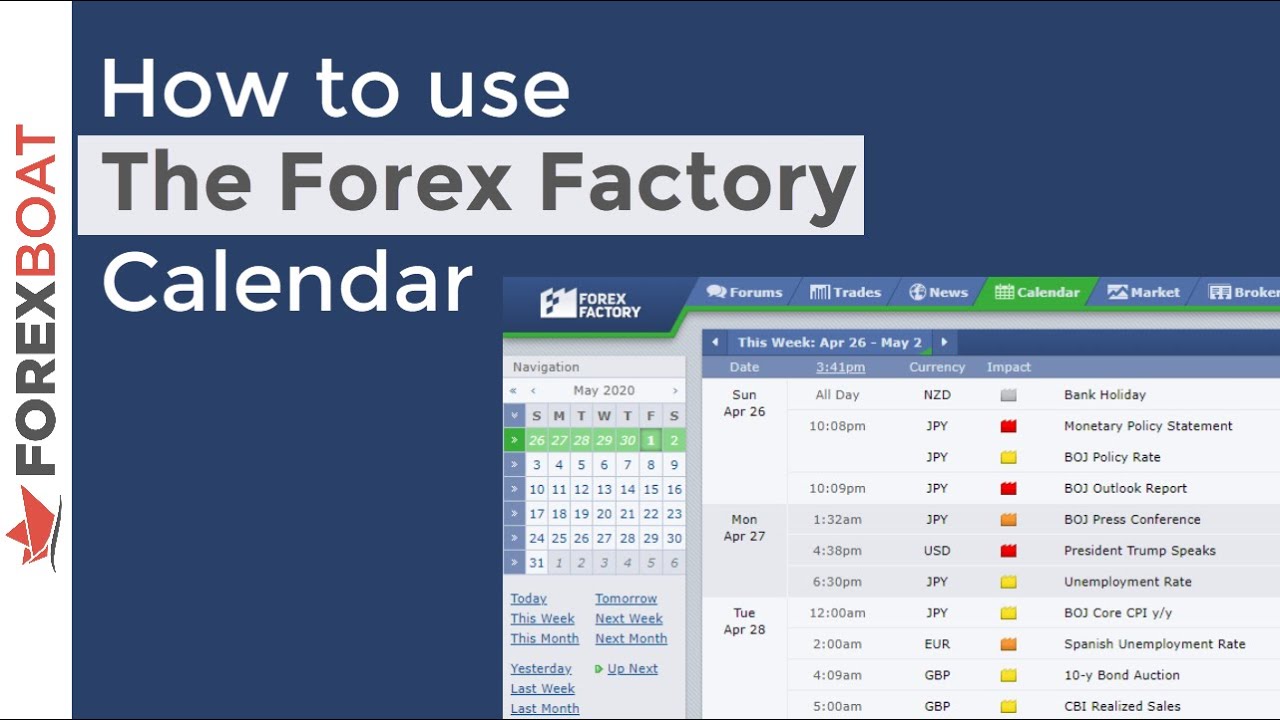

News trading with Forex Factory using the economic calendar

Today I will be making some soup. A beautiful soup. Beautiful Soup is a Python library for extracting data from web pages. This lesson was particularly gruelling and challenging for me.

I spent a couple of nights troubleshooting one issue after another. It took me weeks to learn the very basics of Beautiful Soup in Python. Initially, when I was learning Beautiful Soup, I asked myself what projects could be useful in the area of finance.

Scraping stock prices and volume data is certainly not worth the time. So it has to be something that is useful, automated, time-saving and ideally insightful. After much thought, I decided to scrape economic events from Forex Factory. Forex Factory has a calendar list of upcoming and past economic events with actual, forecast and previous data points. These indicators tell us what is going on in the global economy.

This is an example of what the calendar looks like. But I gave up on the idea almost immediately upon visiting the page. They have done an excellent job of putting up a veil with unnecessary financial jargon. Anyway, what I wanted to do was pick out all the high-impact events along with the corresponding source links, the actual, forecast and previous values, and the entire set of historical data points for each event.

Then export it to Excel at every month-end for my personal consumption. In this article, I will attempt to explain how Beautiful Soup works and how I scrape economic data from Forex Factory, as simply as possible. This is a slightly more advanced topic, as you first need a basic knowledge of Python and HTML.

But that is enough for me to get started in learning other libraries. At a high level, BeautifulSoup crawls through the entire HTML source code of a web page to search for the specific tags that you ask for. Your access to information is limited to what is in that page source. Anything that only appears after JavaScript runs, such as after clicking a button, is out of reach. This is where Selenium comes in.

Selenium drives a real browser, so all these JavaScript functions can run. You can tell Selenium which buttons to click, what to type in the search box and so on.

It is quite fascinating to see it for the first time, as your computer runs automatically, at a very fast speed, doing all sorts of things that you instructed it to do. It is as though someone has taken control of your computer. The advantage of Selenium is that it allows BeautifulSoup to scrape a broader scope of data. The downside is that it slows things down. Ideally, requests is preferred over Selenium, and the latter should be used as a last resort.

To start off, these are some of the libraries that I used. I used pandas to create a data frame and store all the collected data. The time module is used to wait while the page refreshes. The requests library sends a request to the server for a particular web page, and the server then sends back a response. That is how websites generally work on the internet. So I am telling requests to go get this link and send me the response back. After we receive the response from the server, we convert it into text and feed it to BeautifulSoup.
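The request-then-parse step described above could look roughly like the sketch below. The function names `fetch_html` and `parse_html` are my own for illustration, the browser-like header is an assumption about what the server expects, and the sample HTML is made up so that the parsing part can be tried offline.

```python
import requests
from bs4 import BeautifulSoup


def fetch_html(url, timeout=10):
    """Send a GET request and return the response body as text."""
    # Assumption: some sites reject requests without a browser-like User-Agent.
    headers = {"User-Agent": "Mozilla/5.0"}
    response = requests.get(url, headers=headers, timeout=timeout)
    response.raise_for_status()  # raise an error on 4xx/5xx responses
    return response.text


def parse_html(html):
    """Feed the HTML text to BeautifulSoup using Python's built-in parser."""
    return BeautifulSoup(html, "html.parser")


# Offline example with made-up HTML, so no network access is needed.
sample_html = "<html><head><title>Calendar</title></head><body><p>Hello</p></body></html>"
soup = parse_html(sample_html)
page_title = soup.title.get_text()
```

For a live page you would call `parse_html(fetch_html(url))` with the calendar URL instead of the sample string.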

I did not make this up, it is directly from the documentation. The first thing to do is to narrow down the search. All the calendar events are actually contained inside a table. But there are many other tables on the website as well. So we have to tell soup which table it should focus on. You can use the inspect tool to hover over the elements on the website and locate the areas you want to focus on.

So we are going to tell soup to search ONLY inside this table and nowhere else, then store the result in a variable called table. Now table contains the entire set of calendar events that we are interested in.
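Narrowing the search to one table can be sketched like this. The class name `calendar__table` and the sample HTML are assumptions for illustration. Use the inspect tool to confirm what the live page actually uses.

```python
from bs4 import BeautifulSoup

# Made-up HTML with two tables; only the second one holds calendar events.
sample_html = """
<table class="sidebar"><tr><td>ignore me</td></tr></table>
<table class="calendar__table">
  <tr><td>event row 1</td></tr>
  <tr><td>event row 2</td></tr>
</table>
"""

soup = BeautifulSoup(sample_html, "html.parser")

# class_ has a trailing underscore because "class" is a reserved word in Python.
table = soup.find("table", class_="calendar__table")

# Every later search starts from `table`, so other tables are never touched.
rows = table.find_all("tr")
```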

The third step is to understand the structure of an HTML table. I found a good image that sums up what goes on inside a table tag.

A table usually contains a table head, table rows and table data cells. The table that we just crawled and stored is no different: it has table row and table data tags. The next task is to decide what information to retrieve, then determine exactly where this information resides in the table tags. There are a total of 6 items we are interested in.

Using the same method as for finding the calendar table, we just hover over the elements to find out their corresponding tag names and class names. For example, I have done one for Caixin Manufacturing PMI. You can see that all of them are located between the table data tags, each with a different UNIQUE class name. So we are going to tell soup again to search for all these tag names under the table. Now it is going to search only within the table, as we narrowed it down earlier.
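Here is a small sketch of pulling the cells out of one row by class name. The `calendar__...` class names and the numbers in the sample row are made up for illustration and are not real calendar values. `extract_fields` is a hypothetical helper of mine.

```python
from bs4 import BeautifulSoup

# One made-up table row; class names and values are assumptions.
row_html = """
<table><tr class="calendar__row">
  <td class="calendar__currency"> CNY </td>
  <td class="calendar__event">Caixin Manufacturing PMI</td>
  <td class="calendar__actual">1.0</td>
  <td class="calendar__forecast">2.0</td>
  <td class="calendar__previous">3.0</td>
</tr></table>
"""

FIELDS = ["currency", "event", "actual", "forecast", "previous"]


def extract_fields(row):
    """Return a dict mapping each field name to the text of its table data cell."""
    record = {}
    for field in FIELDS:
        cell = row.find("td", class_=f"calendar__{field}")
        # strip=True removes the whitespace around the cell text
        record[field] = cell.get_text(strip=True) if cell else None
    return record


row = BeautifulSoup(row_html, "html.parser").find("tr")
record = extract_fields(row)
```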

For example, as soup searches through each event in the table, it should ONLY extract information about those that are high impact. The rest should be ignored. Hence, a conditional statement must be included. Lastly, we need the URL links that enable Selenium to extract the latest news release. I first have to click the folder icon on the right, then it brings up a new page as shown in the URL link.

Only then does it show the source link that we want. Now we have to find a way for this to happen in each loop as soup searches through the rows. One way could be to use Selenium to click on it. But I figured out another, faster method using requests.

If you look at the URL of each event detail, the base URL is the same except that a detail id is appended at the end of the link. This gives us all the additional information that we require. Here is the code to do it. Note that the links we stored are not the actual source links themselves. Each one only opens up an additional information box for the event detail. It is only from there that we can extract the original source link.
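As a rough sketch of the idea, the detail links can be built by appending each id to a base URL. The base URL below is a placeholder of mine, not the real Forex Factory address, and the ids are made up. `build_detail_urls` is a hypothetical helper.

```python
# Placeholder base URL (assumption). Replace it with the base you see in the
# browser's address bar when an event detail is open.
BASE_DETAIL_URL = "https://example.com/calendar?detail="


def build_detail_urls(event_ids, base_url=BASE_DETAIL_URL):
    """Append each event id to the shared base URL."""
    return [f"{base_url}{event_id}" for event_id in event_ids]


event_ids = ["1001", "1002"]  # made-up ids for illustration
detail_urls = build_detail_urls(event_ids)
```

Each of these URLs can then be requested one by one, which avoids opening a browser and clicking.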

Here is a summary of the code that I have written, based on the logic that we defined in the steps above. First, we create some empty lists. Then we ask Python to loop through each row in the table that we just filtered out. There are two conditions in the loop. The first is that the row has to have a link, and the second is that it must be a high-impact event. In each loop, we are going to store the data event id in a separate list.

This list will be used later to collect the source links. Finally, the loop also extracts all the table data values such as currency, event name, actual, forecast and previous.
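The loop with its two conditions could be sketched as follows. The `data-eventid` attribute, the `high` marker class and the `calendar__...` class names are assumptions about the page markup, and the sample rows are made up. I keep the lists in one dict here so they are ready for pandas later.

```python
from bs4 import BeautifulSoup

sample_html = """
<table class="calendar__table">
  <tr data-eventid="101">
    <td class="calendar__currency">USD</td>
    <td class="calendar__impact"><span class="high"></span></td>
    <td class="calendar__event">Sample Event A</td>
    <td class="calendar__actual">1.0</td>
    <td class="calendar__forecast">1.1</td>
    <td class="calendar__previous">0.9</td>
  </tr>
  <tr data-eventid="102">
    <td class="calendar__currency">EUR</td>
    <td class="calendar__impact"><span class="low"></span></td>
    <td class="calendar__event">Sample Event B</td>
    <td class="calendar__actual">2.0</td>
    <td class="calendar__forecast">2.0</td>
    <td class="calendar__previous">2.1</td>
  </tr>
  <tr>
    <td class="calendar__impact"><span class="high"></span></td>
    <td class="calendar__event">Row without an event id</td>
  </tr>
</table>
"""

FIELDS = ["currency", "event", "actual", "forecast", "previous"]


def cell_text(row, name):
    """Return the stripped text of the named cell, or an empty string."""
    cell = row.find("td", class_=f"calendar__{name}")
    return cell.get_text(strip=True) if cell else ""


soup = BeautifulSoup(sample_html, "html.parser")
table = soup.find("table", class_="calendar__table")

# Start with empty lists, one per column.
columns = {"event_id": []}
columns.update({field: [] for field in FIELDS})

for row in table.find_all("tr"):
    event_id = row.get("data-eventid")  # None when the row has no id
    is_high_impact = row.find("span", class_="high") is not None
    # Condition 1: the row has an id to build a link from.
    # Condition 2: the event is marked as high impact.
    if event_id and is_high_impact:
        columns["event_id"].append(event_id)
        for field in FIELDS:
            columns[field].append(cell_text(row, field))
```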

That is a brief overview of what is happening inside the code. Alright, looks pretty good. Just some data cleaning to do. The second line of code is where the cleaning is done. Splitting the raw currency text gives three separate values, but we are interested only in the middle one, which is the currency name. Hence, str[1]. Looks pretty neat now.
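The cleaning step might look like the sketch below. I am assuming the raw currency text comes wrapped in newline characters, so that splitting on a newline gives three pieces with the currency in the middle. The sample rows are made up.

```python
import pandas as pd

# Made-up raw data; the surrounding newlines are an assumption about the scrape.
df = pd.DataFrame(
    {
        "currency": ["\nUSD\n", "\nCNY\n"],
        "event": ["Sample Event A", "Sample Event B"],
    }
)

# "\nUSD\n".split("\n") gives ["", "USD", ""], so index 1 is the currency name.
df["currency"] = df["currency"].str.split("\n").str[1]

clean_currencies = df["currency"].tolist()
```

If the raw text only has surrounding whitespace, `df["currency"].str.strip()` would do the same job in one step.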

We have successfully extracted all the high-impact events with their corresponding actual, forecast and previous values. There are a total of 65 high-impact events in January. Now there is just one thing missing: the source link. But I chose to use Selenium because I intend to grab all the historical actual values for each event detail.

You can see the 2 red boxes on the right. What Selenium can do is click the more button repeatedly until it goes all the way back to the earliest date. When the entire table of historical data points is fully loaded, I would ask Python to go and collect all these dates and actual values.
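The click-until-nothing-is-left loop can be sketched as below. `click_until_gone` and `make_selenium_finder` are hypothetical helpers of mine, and the CSS selector you pass in would be an assumption about the page. The Selenium imports sit inside the helper only so that the loop itself can be tried without Selenium installed.

```python
import time


def click_until_gone(find_button, pause_seconds=0.5, max_clicks=200):
    """Click the button returned by find_button() until it returns None.

    Returns the number of clicks made. max_clicks guards against a button
    that never disappears.
    """
    clicks = 0
    while clicks < max_clicks:
        button = find_button()
        if button is None:
            break
        button.click()
        clicks += 1
        time.sleep(pause_seconds)  # give the page time to load more rows
    return clicks


def make_selenium_finder(driver, css_selector):
    """Build a find_button callable for a Selenium WebDriver."""
    from selenium.common.exceptions import NoSuchElementException
    from selenium.webdriver.common.by import By

    def find_button():
        try:
            return driver.find_element(By.CSS_SELECTOR, css_selector)
        except NoSuchElementException:
            return None

    return find_button


# Offline demo with a fake button that disappears after three clicks.
class FakeButton:
    def __init__(self):
        self.clicks = 0

    def click(self):
        self.clicks += 1


fake = FakeButton()
demo_clicks = click_until_gone(
    lambda: fake if fake.clicks < 3 else None, pause_seconds=0
)
```

With a real browser you would pass `make_selenium_finder(driver, selector)` as `find_button`, then hand `driver.page_source` to BeautifulSoup once the loop ends.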

